Why exactly did Intel scrap its asynchronous chip, and what’s changed since then?

Non-mainstream technologies can offer advantages over more commonly used approaches, but usually at some additional cost (otherwise they’d probably be mainstream). The additional cost could be in design time, area, testability or something else, and it might even be only a temporary disadvantage. If comparable time and energy were invested in the new technology, perhaps the additional costs would disappear. For example, silicon wafers are still often used as the substrate even when devices built from III-V materials are fabricated on top.

There’s been a lot of knowledge gained over the decades on handling silicon and how it behaves under many different environments. How about the “QWERTY” keyboard, which was purposely designed to slow down typists so that the keys wouldn’t jam? Surely there are better keyboard layouts, but once something becomes entrenched it can be hard to displace it.

Back in the mid-1990s, Intel ran an asynchronous project to build a Pentium for comparison purposes. What were the results? Intel’s clockless prototype in 1997 ran three times faster than the conventional-chip equivalent, on half the power. (See Claire Tristram’s article here for more information.) Now, three times faster at half the power sounds pretty compelling. What stopped Intel from commercializing these clockless designs? To get better insight into what’s happening in the field of asynchronous design, I attended the recent Async 2015 in Mountain View, Calif.

The first day of the conference was billed as an “Industry Day” with topics chosen to suit companies that are likely doing synchronous digital design with an interest toward learning more about asynchronous design. Essentially, in a good asynchronous design it’s possible to get the benefits of clock-gating, operand isolation and “useful” skew for “free” along with glitch-free operation, no clock tree power and better resiliency to PVT variation. (See earlier articles Making Waves in Low-Power Design and The Power of Logic.) Even back in the mid-90s, designers were concerned about the power being used by clock-tree circuitry and that managing skew and jitter at higher frequencies would likely worsen the situation. In large part, designers have reined in some of these issues by using clock gating and splitting their chips up into multiple clock domains, so that one large clock isn’t driving the whole chip. Chris Kwok from Mentor Graphics mentioned during his presentation that they’ve seen chips designed with more than 150 independent clock domains, more than 10 reset domains, and more than 10 power domains and voltage domains.

A paper presented by L-C Trudeau et al., representing École de Technologie Supérieure and Octasic Inc., both in Montréal, Canada, reported on a novel Low-Latency, Energy-Efficient L1 Cache Based on a Self-Timed Pipeline. Compared with the standard synchronous approach, the cache was more than 22% more energy-efficient and its access time was reduced by more than 25%. Work like this may help designers at least incorporate useful asynchronous portions into their synchronous designs.

David Zar from Blendics presented work by Jerome Cox et al. on metastability and performance in synchronizer flip-flops, an important area for asynchronous designs. The team showed how the requirements for synchronizers and data flip-flops differ, and analyzed gain-bandwidth (GBW) as a way to improve the performance of synchronizer flip-flops in asynchronous designs.

Peter Beerel has been working in the field of asynchronous design for years, co-founding Timeless Design Automation. Teams he’s working with at USC in Los Angeles, at universities in Porto Alegre and Santa Cruz do Sul in Brazil, and in Beijing, China, had two papers on Blade presented by Dylan Hand. I view Blade as sort of like Razor (another scheme for recovering from delay faults) on steroids. The idea is to win back most of the margin that digital design methodologies build in for worst-case delay paths and variability, as shown in Figure 1 below from their presentation.

Figure 1. Delay Overheads in Typical Digital Designs

Blade uses two types of reconfigurable delay lines, shown in Figure 2, to enable their designs to run closer to the average delay rather than at a margined worst case delay. There’s a “standard” δ delay line and then an additional “resiliency” Δ delay line to stall latching when needed. The authors reported significantly improved results on a MIPS OpenCore design compared to Razor and standard synchronous implementations.
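To make the intuition concrete, here is a minimal Python sketch of why running near the average delay can beat a margined worst case. It is a toy model under stated assumptions, not the authors’ controller: the function names, the rule that a late operation costs δ + Δ, and the picosecond values are all hypothetical.

```python
def blade_like_cycle_time(delay_ps, delta_ps, resiliency_ps):
    """Time one operation costs in this toy model (all values in picoseconds).

    Assumption: an operation that finishes within delta costs delta;
    one that finishes inside the resiliency window costs delta + resiliency,
    standing in for the stall; anything later is a timing failure.
    """
    if delay_ps <= delta_ps:
        return delta_ps
    if delay_ps <= delta_ps + resiliency_ps:
        return delta_ps + resiliency_ps
    raise ValueError("delay exceeds delta + resiliency window")


def average_cycle_time(delays_ps, delta_ps, resiliency_ps):
    """Average cost per operation over a list of observed delays."""
    costs = [blade_like_cycle_time(d, delta_ps, resiliency_ps) for d in delays_ps]
    return sum(costs) / len(costs)


def worst_case_period(delta_ps, resiliency_ps):
    """Period a fully margined design would use in this model."""
    return delta_ps + resiliency_ps


if __name__ == "__main__":
    delays = [500, 600, 900, 550]  # hypothetical per-operation delays
    print(average_cycle_time(delays, 700, 300))
    print(worst_case_period(700, 300))
```

In this model the benefit depends on how rarely operations land in the resiliency window: the more often they do, the closer the average gets to the margined period.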

Figure 2. Addition of the Resiliency Window in Controller

The conference dinner was held on a Tuesday evening and Paul Cunningham and Steev Wilcox, both from Cadence but formerly at Azuro, gave the after dinner keynote address: A Random Walk from Async to Sync. The talk was quite interesting and simultaneously entertaining. Cunningham founded Azuro with the intention of bringing asynchronous design to the mainstream. As they talked with more customers and began to realize the monumental task that they had undertaken, the company’s goals shifted to more closely align with the present needs of their digital design customers, eventually becoming an industry leader in clock-tree synthesis and then selling the company to Cadence.

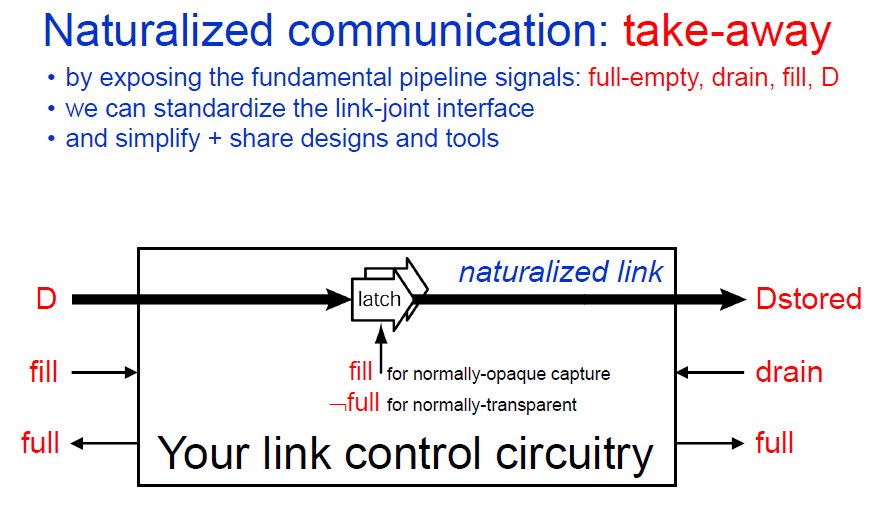

Testability has been an issue for asynchronous design, and Marly Roncken from Portland State University gave a presentation on Naturalized Communication and Testing. She described a methodology where asynchronous pipeline stages are partitioned into links and joints, exposing fundamental pipeline signals: full-empty, drain, fill and Data, and then control circuitry (GO) is incorporated into the joint to create testable designs. The team even had demonstration boards at the conference.

Figure 3. Naturalized Communication Structure for Asynchronous Testability

An interesting paper listed under “Fresh Ideas” was presented by Montek Singh, entitled IMAGIN: A Frameless HDR Camera. This work was performed by his team at the University of North Carolina and explores a novel approach to capturing good images of scenes with extremes in lighting conditions across them, for example a picture of someone standing inside a room next to a bright window to the outdoors. Today most approaches involve multiple exposures (pictures), followed by a large amount of computation to stitch the multiple images back into one. In contrast, the approach taken here is to time how long it takes each sensor to get to “full”, instead of counting how many times the sensor “bucket” fills during any given exposure period. This can also lead to a “frameless” imaging system, as the sensors can asynchronously update as new time values arrive.
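The timing idea can be illustrated with a short Python sketch. This is only a simplified model under assumed names and units (`full_well`, a linear charge rate), not the IMAGIN design itself; for contrast it uses a plain fixed-exposure reading that clips at the sensor capacity.

```python
def fixed_exposure_reading(intensity, exposure, full_well):
    """Charge collected in a fixed exposure, clipped at sensor capacity."""
    return min(intensity * exposure, full_well)


def time_to_full(intensity, full_well):
    """Time for a sensor to reach capacity, assuming a constant charge rate."""
    return full_well / intensity


def intensity_from_time(t_full, full_well):
    """Recover intensity from the measured time-to-full."""
    return full_well / t_full


if __name__ == "__main__":
    full_well = 1000.0  # hypothetical capacity, arbitrary units
    for intensity in (10.0, 4000.0, 8000.0):
        clipped = fixed_exposure_reading(intensity, 1.0, full_well)
        t = time_to_full(intensity, full_well)
        print(intensity, clipped, t, intensity_from_time(t, full_well))
```

In this model the two bright pixels give the same clipped reading under a fixed exposure, while their time-to-full values differ, so the intensity can still be told apart.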

The best paper award went to the team of E. Zianbetov, E. Beigné, and G. Di Pendina out of Grenoble for their paper, Non-Volatility For Ultra-Low Power Asynchronous Circuits in Hybrid CMOS/Magnetic Technology, which describes the creation of non-volatile C-elements using MRAM. The non-volatile storage adds yet another interesting characteristic on top of the already robust nature of many asynchronous designs.
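For readers unfamiliar with the C-element, the following Python sketch shows its standard behavior: the output switches only when both inputs agree and otherwise holds its previous value. The `nonvolatile` flag and `power_cycle` method are hypothetical abstractions added here to illustrate state retention; they do not model the paper’s MRAM circuit, and resetting the volatile state to 0 is an assumption (in real hardware it would be undefined).

```python
class CElement:
    """Behavioral model of a two-input Muller C-element."""

    def __init__(self, nonvolatile=False):
        self.state = 0
        self.nonvolatile = nonvolatile  # hypothetical flag for state retention

    def update(self, a, b):
        """Output follows the inputs when they agree, otherwise holds."""
        if a == b:
            self.state = a
        return self.state

    def power_cycle(self):
        """Simulate losing and restoring power.

        Assumption: a volatile element comes back as 0;
        a non-volatile element keeps its last state.
        """
        if not self.nonvolatile:
            self.state = 0
        return self.state


if __name__ == "__main__":
    c = CElement(nonvolatile=True)
    c.update(1, 1)
    print(c.power_cycle())
```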

So back to our earlier question: why did Intel scrap its asynchronous chip? The cost of such a “radical” change in methodology was simply considered too high. From Claire Tristram’s article, “If you get three times the power going with an asynchronous design, but it takes you five times as long to get to the market, well, you lose,” said Intel senior scientist Ken Stevens, who worked on the 1997 asynchronous project. “It’s not enough to be a visionary, or to say how great this technology is. It all comes back to whether you can make it fast enough, and cheaply enough, and whether you can keep doing it year after year.”

There’s been a lot of time, effort and money invested in synchronous digital design. Once upon a time it was considered crazy to put any logic on clock signals, and now clock-gating is a commonly used synchronous design technique. It’s quite possible that concepts like Blade will start to work their way into more designs. Whole new application spaces that are being opened under the guise of the Internet of Things are very energy-sensitive, and asynchronous design may just find some new inroads. As more constraints are piled on as we move to smaller feature sizes, the benefits may start to outweigh the costs. Is it time yet?
