What’s needed for performing inference efficiently in low/mid-end edge devices.

By Pieter van der Wolf and Dmitry Zakharov

Increasingly, machine learning (ML) is being used to build devices with advanced functionalities. These devices apply machine learning technology that has been trained to recognize certain complex patterns in data captured by one or more sensors, such as voice commands captured by a microphone, and then perform an appropriate action. For example, when the voice command “play music” is recognized, a smart speaker can initiate the playback of a song. Machine learning is the capability of algorithms to learn without being explicitly programmed. This article discusses machine learning inference, which is the process of taking a model that has already been trained and applying it to input data captured by sensors in order to infer the complex patterns the model has been trained to recognize. We’ll consider requirements for the efficient implementation of machine learning inference to build smart IoT edge devices.

Requirements for low-power machine learning inference for IoT

IoT edge devices that employ machine learning inference typically perform different types of processing, as shown in Figure 1.

Figure 1. Different types of processing in machine learning inference applications.

These devices typically perform some pre-processing and feature extraction on the sensor input data before doing the actual neural network processing for the trained model. For example, a smart speaker with voice control capabilities may first pre-process the voice signal by performing acoustic echo cancellation and multi-microphone beam-forming. Then it may apply FFTs to extract the spectral features for use in the neural network processing, trained to recognize a vocabulary of voice commands.

For each layer in a neural network, the input data must be transformed into output data. A commonly used transformation is the convolution, which convolves, or more precisely correlates, the input data with a set of trained weights. This transformation is used in convolutional neural networks (CNNs), which are often applied in image or video recognition. Recurrent neural networks (RNNs) are a different kind of neural network that maintains state while processing sequences of inputs. As a result, RNNs also have the ability to recognize patterns across time. RNNs are often applied in text and speech recognition applications. The key operation in these networks is the dot-product operation on input samples and weights. It is, therefore, a requirement for a processor to implement such dot-product operations efficiently. This involves efficient computation, e.g. using multiply-accumulate (MAC) instructions, as well as efficient access to input data, weight kernels, and output data.
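To make the role of the dot product concrete, here is a minimal sketch in portable C of a one-dimensional correlation built from scalar MAC steps. The function names (`mac16`, `dot_product_i16`, `correlate_1d`) and the choice of 16-bit samples with a 32-bit accumulator are assumptions made for illustration; they are not taken from any particular processor or library.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One multiply-accumulate (MAC) step: acc += a * b, widened to 32 bits.
 * Assumption: the running sum stays within the int32_t range. */
static inline int32_t mac16(int32_t acc, int16_t a, int16_t b)
{
    return acc + (int32_t)a * (int32_t)b;
}

/* Dot product of n input samples with n trained weights. */
int32_t dot_product_i16(const int16_t *in, const int16_t *w, size_t n)
{
    assert(in != NULL && w != NULL);
    int32_t acc = 0;
    for (size_t i = 0; i < n; ++i) {
        acc = mac16(acc, in[i], w[i]);
    }
    return acc;
}

/* 1-D correlation (the kernel is not flipped):
 * out[o] = sum over k of in[o * stride + k] * w[k] */
void correlate_1d(const int16_t *in, size_t in_len,
                  const int16_t *w, size_t k_len, size_t stride,
                  int32_t *out, size_t out_len)
{
    assert(stride > 0 && k_len > 0 && in_len >= k_len);
    assert(out_len == (in_len - k_len) / stride + 1);
    for (size_t o = 0; o < out_len; ++o) {
        out[o] = dot_product_i16(&in[o * stride], w, k_len);
    }
}
```

Each output element costs `k_len` MAC operations plus the loads of samples and weights, which is why both the MAC throughput and the operand access path matter for cycle counts.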

Implementation requirements

Memory requirements for low/mid-end machine learning inference are typically modest, thanks to limited input data rates and the use of neural networks with limited complexity. Input and output maps are often of limited size, i.e. a few tens of kilobytes or less, and the number and size of the weight kernels are also relatively small. Using the smallest possible data types for input maps, output maps and weight kernels helps to reduce memory requirements.
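The effect of the data type on memory footprint is simple arithmetic. The sketch below uses a hypothetical helper, `map_bytes`, and an assumed 32×32×3 map purely as an example; neither comes from a specific application.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Bytes needed to store a map of height x width x channels elements,
 * each element occupying elem_size bytes. */
size_t map_bytes(size_t height, size_t width, size_t channels,
                 size_t elem_size)
{
    assert(elem_size > 0);
    return height * width * channels * elem_size;
}
```

For the assumed 32×32×3 map, `map_bytes(32, 32, 3, sizeof(int8_t))` gives 3,072 bytes, `sizeof(int16_t)` gives 6,144 bytes, and a 32-bit element type gives 12,288 bytes, so halving the element width halves the storage for that map.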

Low/mid-end machine learning inference applications combine several types of processing: DSP for pre-processing and feature extraction, neural network processing, and control processing.

The different types of processing may be implemented using a heterogeneous multi-processor architecture, with different types of processors to satisfy the different processing requirements. However, for low/mid-end machine learning inference, the total compute requirements are often limited and can be handled by a single processor running at a reasonable frequency, provided it has the right capabilities. Using a single processor eliminates the area overheads and communication overheads associated with multi-processor architectures. It also simplifies software development, as a single toolchain can be used for the complete application. However, it requires that the processor can perform the different types of processing, i.e. DSP, neural network processing, and control processing, with excellent cycle efficiency.

Processor capabilities for low-power machine learning inference

Selecting the right processor is key to achieving high efficiency for the implementation of low/mid-end machine learning inference. Specifically, having the right processor capabilities for neural network processing can be the difference between meeting low MHz requirements and, hence, low power consumption, or not. Synopsys’ DSP-enhanced ARC EM9D processor offers some key capabilities that can be used to implement neural network processing efficiently.

The dot-product operation on input samples and weights is a dominant computation. It is implemented using the multiply-accumulate (MAC) operation, which incrementally sums up the products of input samples and weights. Vectorization of the MAC operations is an important way to increase the efficiency of neural network processing. Figure 2 illustrates two types of vector MAC instructions of the ARC EM9D processor.

Figure 2. Two types of vector MAC instructions of the ARC EM9D processor

Both of these vector MAC instructions operate on 2×16-bit vector operands. The DMAC instruction on the left is a dual-MAC that can be used to implement a dot-product, with A1 and A2 being two neighboring samples from the input map and B1 and B2 being two neighboring weights from the weight kernel. The ARC EM9D processor supports 32-bit accumulators for which an additional eight guard bits can be enabled to avoid overflow. The DMAC operation can effectively be used for weight kernels with an even width, reducing the number of MAC instructions by a factor of two compared to a scalar implementation. However, for weight kernels with an odd width this instruction is less effective. In such cases the VMAC (vector-MAC) instruction, shown on the right in Figure 2, can be used to perform two dot-product operations in parallel, accumulating intermediate results into two accumulators. In case the weight kernel ‘moves’ over the input map with a stride of one, A1 and A2 are two neighboring samples from the input map and the value of B1 and B2 is the same weight being applied to both A1 and A2.
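The difference between the two instructions can be modeled in portable C. The sketch below is a behavioral model only: `vec2x16`, `dmac_model`, `vmac_model` and the two loop functions are hypothetical names, not ARC intrinsics, and the plain 32-bit accumulators ignore the optional guard bits.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical 2x16-bit vector operand. */
typedef struct { int16_t lo, hi; } vec2x16;

/* Dual-MAC model: acc += A1*B1 + A2*B2, one accumulator. */
static inline int32_t dmac_model(int32_t acc, vec2x16 a, vec2x16 b)
{
    return acc + (int32_t)a.lo * b.lo + (int32_t)a.hi * b.hi;
}

/* Vector-MAC model: acc[0] += A1*B1 and acc[1] += A2*B2, two accumulators. */
static inline void vmac_model(int32_t acc[2], vec2x16 a, vec2x16 b)
{
    acc[0] += (int32_t)a.lo * b.lo;
    acc[1] += (int32_t)a.hi * b.hi;
}

/* Even kernel width: one dual-MAC consumes two samples and two weights. */
int32_t dot_even(const int16_t *in, const int16_t *w, size_t k_len)
{
    assert(k_len % 2 == 0);
    int32_t acc = 0;
    for (size_t k = 0; k < k_len; k += 2) {
        vec2x16 a = { in[k], in[k + 1] };
        vec2x16 b = { w[k],  w[k + 1]  };
        acc = dmac_model(acc, a, b);
    }
    return acc;
}

/* Any kernel width, stride 1: two neighboring outputs are computed in
 * parallel. The caller must provide k_len + 1 input samples. */
void two_outputs_stride1(const int16_t *in, const int16_t *w,
                         size_t k_len, int32_t out[2])
{
    out[0] = 0;
    out[1] = 0;
    for (size_t k = 0; k < k_len; ++k) {
        vec2x16 a = { in[k], in[k + 1] }; /* A1, A2: neighboring samples */
        vec2x16 b = { w[k],  w[k]      }; /* B1 == B2: the same weight   */
        vmac_model(out, a, b);
    }
}
```

In both loops the number of MAC steps is half that of a scalar implementation producing the same results: `dot_even` halves the steps per output, while `two_outputs_stride1` keeps the steps per kernel pass but yields two outputs.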

Efficient execution of the dot-product operations also requires sufficient memory bandwidth to feed operands to the MAC instructions as well as ways to avoid overheads for performing address updates, data size conversions, etc. For these purposes, the ARC EM9D processor provides XY memory with advanced address generation. The XY memory provides up to three logical memories that the processor can access concurrently, as illustrated in Figure 3. The processor can access memory through a regular load or store instruction or it can enable a functional unit to perform memory accesses through address generation units (AGUs). An AGU can be set up with an address pointer to data in one of the memories and a prescription, or modifier, to update this address pointer in a particular way when a data access is performed through the AGU. After set-up, the AGUs can be used in instructions for directly accessing operands and storing results from/to memory. No explicit load or store instructions need to be executed for these operands and results, yielding lower cycle counts for the dot-product operations.
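The idea of an address pointer plus a modifier can be illustrated with a small software model. This is a sketch under assumptions: `agu_model`, `agu_read` and `dot_product_agu` are hypothetical names, the modifier is reduced to a fixed element step, and real AGU hardware performs the update as part of the instruction rather than in separate code.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Software model of one address generation unit (AGU). */
typedef struct {
    const int16_t *base;  /* start of the data in one of the memories */
    ptrdiff_t      index; /* current element offset (address pointer) */
    ptrdiff_t      step;  /* modifier: offset change after each access */
} agu_model;

/* Read through the AGU, then apply the modifier to the pointer. */
static inline int16_t agu_read(agu_model *agu)
{
    int16_t value = agu->base[agu->index];
    agu->index += agu->step;
    return value;
}

/* Dot product whose operands are fetched through two AGUs, so the loop
 * body contains no explicit address arithmetic. */
int32_t dot_product_agu(const int16_t *in, ptrdiff_t in_step,
                        const int16_t *w, size_t n)
{
    assert(in_step != 0);
    agu_model x = { in, 0, in_step }; /* input samples, strided    */
    agu_model y = { w,  0, 1 };       /* weights, contiguous       */
    int32_t acc = 0;
    for (size_t i = 0; i < n; ++i) {
        int16_t a = agu_read(&x);
        int16_t b = agu_read(&y);
        acc += (int32_t)a * b;
    }
    return acc;
}
```

With `in_step` set to 2, for example, the model reads every second input sample without any extra instructions in the loop, which is the kind of overhead the hardware modifiers are meant to remove.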

Figure 3. ARC EM9D processor with XY memory and address generation units (AGUs)

The processor offers a broad set of DSP capabilities that enable the efficient implementation of other functions, such as pre-processing and feature extraction (e.g. FFTs).

The combination of neural network processing, DSP and efficient control processing capabilities makes the ARC EM9D well-suited to implement complete low/mid-end machine learning inference applications on a single processor.

A software library for machine learning inference

After selecting the right processor, the next question is how to arrive at an efficient software implementation of the targeted machine learning inference application.

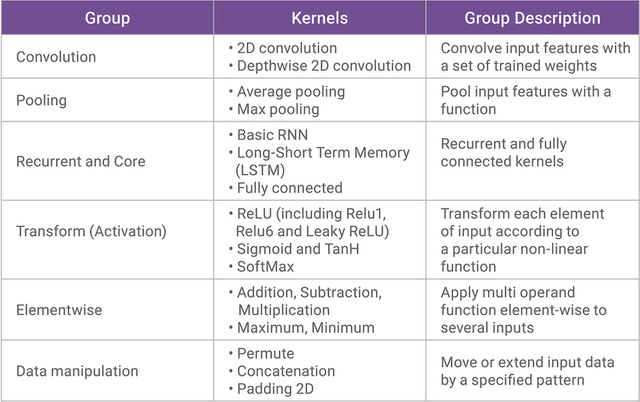

The embARC Machine Learning Inference (MLI) library is a set of C functions and associated data structures for building neural networks. Library functions, also called kernels, implement the processing associated with a complete layer in a neural network, with multidimensional input/output maps represented as tensor structures. Thus, a neural network graph can be implemented as a series of MLI function calls. Table 1 lists the currently supported MLI kernels, organized in six groups. For each kernel there can be multiple functions in the library, including functions specifically optimized to support particular data types, weight kernel sizes, and strides. Additionally, helper functions are provided; these include operations like data type conversion, data pointing, and other operations not directly involved in the kernel functionality.
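The "one function call per layer" structure can be sketched with a toy example. Everything below (`toy_tensor`, `toy_fully_connected`, `toy_relu`, `toy_network`) is hypothetical and written only to show the pattern; it is not the embARC MLI API, and it uses plain integer arithmetic with saturation rather than a fixed-point format.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_MAX_RANK 4

/* Hypothetical tensor: a data pointer plus shape information. */
typedef struct {
    int16_t *data;
    size_t   rank;
    size_t   shape[TOY_MAX_RANK];
} toy_tensor;

/* Total number of elements in a tensor. */
size_t toy_count(const toy_tensor *t)
{
    size_t n = 1;
    for (size_t i = 0; i < t->rank; ++i) {
        n *= t->shape[i];
    }
    return n;
}

/* Fully connected layer: out[o] = bias[o] + sum_i in[i] * w[o][i],
 * saturated to the int16_t range. */
void toy_fully_connected(const toy_tensor *in, const toy_tensor *w,
                         const toy_tensor *bias, toy_tensor *out)
{
    size_t n_in = toy_count(in);
    size_t n_out = toy_count(out);
    assert(w->rank == 2 && w->shape[0] == n_out && w->shape[1] == n_in);
    assert(toy_count(bias) == n_out);
    for (size_t o = 0; o < n_out; ++o) {
        int32_t acc = bias->data[o];
        for (size_t i = 0; i < n_in; ++i) {
            acc += (int32_t)in->data[i] * w->data[o * n_in + i];
        }
        if (acc > INT16_MAX) acc = INT16_MAX;
        if (acc < INT16_MIN) acc = INT16_MIN;
        out->data[o] = (int16_t)acc;
    }
}

/* ReLU activation layer: negative values become zero. */
void toy_relu(const toy_tensor *in, toy_tensor *out)
{
    size_t n = toy_count(in);
    assert(toy_count(out) == n);
    for (size_t i = 0; i < n; ++i) {
        out->data[i] = in->data[i] > 0 ? in->data[i] : 0;
    }
}

/* The network graph is just a sequence of layer calls on tensors. */
void toy_network(const toy_tensor *input, const toy_tensor *w,
                 const toy_tensor *bias, toy_tensor *hidden,
                 toy_tensor *output)
{
    toy_fully_connected(input, w, bias, hidden);
    toy_relu(hidden, output);
}
```

Because each layer reads and writes tensors, intermediate buffers such as `hidden` can be allocated once by the application and reused, which fits the modest memory budgets discussed earlier.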

Table 1. Supported kernels in the embARC MLI library

Conclusion

Increasingly, smart IoT devices that interact intelligently with their users apply machine learning technology for processing captured sensor data, so that smart actions can be taken based on recognized patterns. The ARC EM9D processor offers several capabilities, such as vector MAC instructions and XY memory with advanced address generation units, that are key to the efficient implementation of machine learning inference engines. Together with its other DSP and control processing capabilities, the ARC EM9D is a universal core for low-power IoT applications. The complete and highly optimized embARC MLI library makes effective use of the ARC EM9D processor to efficiently support a wide range of low/mid-end machine learning applications.

To learn more, read the white paper “Say Welcome to the Machine – Low-Power Machine Learning for Smart IoT Applications.”

Pieter van der Wolf is a principal R&D engineer at Synopsys.

Dmitry Zakharov is a senior software engineer at Synopsys.
